Google is testing an experimental AI search tool on YouTube

- Entrepreneurs Story

- April 29, 2026

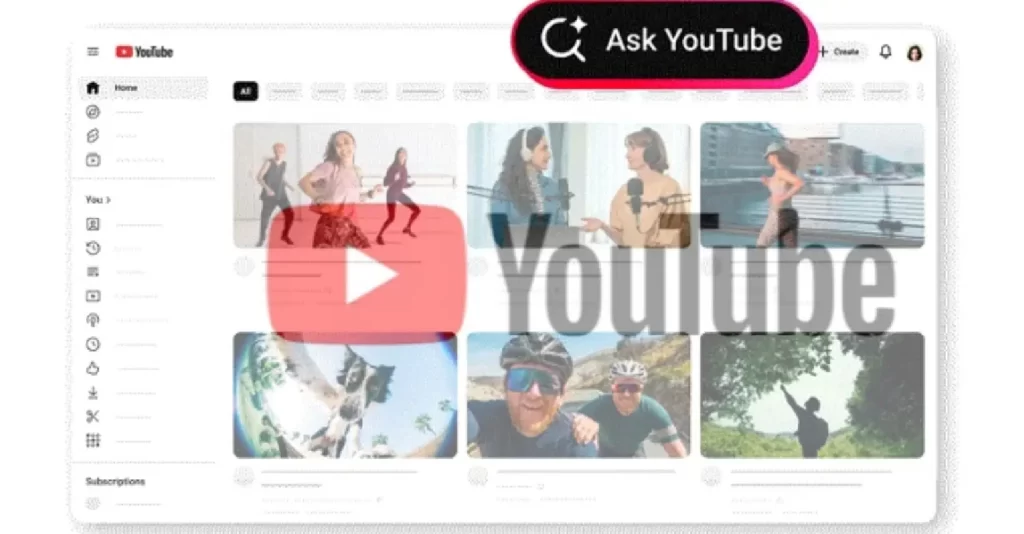

Google is testing an experimental AI search tool on YouTube, a significant step in the company's push to integrate generative artificial intelligence across its ecosystem. The pilot marks a shift from traditional metadata-driven content retrieval to a native, conversational, intent-based discovery model. Powered by Google's Large Language Models (LLMs), the feature aims to increase user engagement by giving viewers deeper insight into video content. Rather than relying on simple keyword matching, it attempts to understand the semantic nuance of what is said and shown in a video. It points to the next stage in how we engage with digital content: instead of passively serving up results, platforms will actively help users synthesize information.

The Development of Content Discovery

For more than twenty years, digital content platforms have depended on a symbiotic relationship: creators supply metadata (titles, descriptions, tags) and users supply keywords. This model works, but it is inherently limited, particularly for long-form video. Users looking for specific information in a thirty-minute tutorial or an in-depth documentary often have to scrub through the timeline manually or rely on timestamps added by the creator.

Google's decision to test an experimental AI search tool on YouTube is a direct response to this friction. By applying conversational AI principles similar to those behind the generative AI features in Google Search, YouTube aims to make its discovery engine more intuitive, dynamic and efficient.

How the Experimental AI Search Tool on YouTube Works

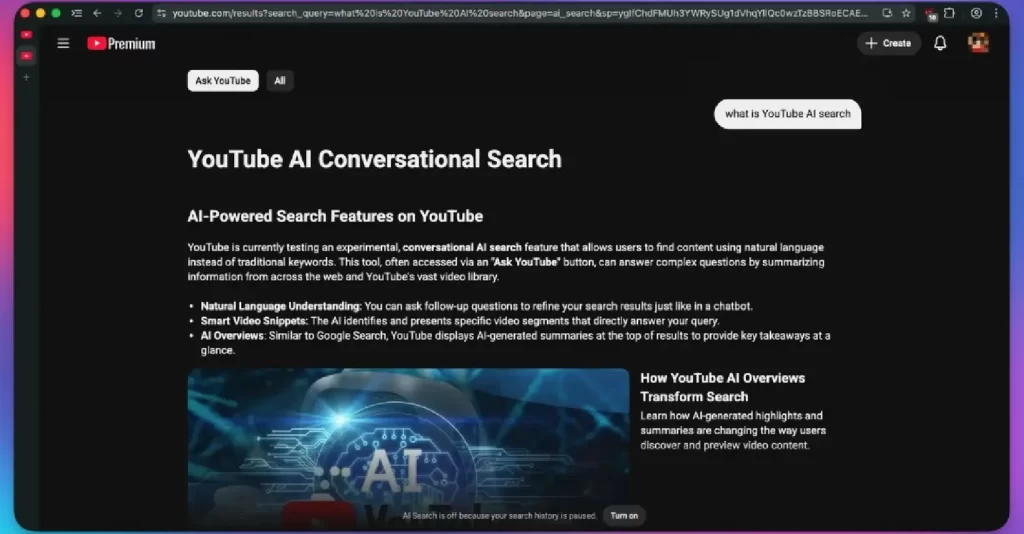

The experimental AI search feature is not a surface-level chatbot but a deeply integrated retrieval mechanism. It works by analyzing video transcripts and, presumably, visual semantics in real time to answer complex user queries.

- Conversational Q&A and Deep Video Intelligence

The main feature of this experiment is the ability to ‘Ask’ the platform about the video you are watching. Rather than searching for another video, viewers can question the current content directly.

For example, a user watching a complicated technical lecture might ask, “What was the definition of polymorphic encryption?” The tool answers based purely on that video's transcript and displays the answer in real time, so the user does not have to stop watching or scrub back through the timeline.
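Google has not published how the tool grounds its answers, so the following is only a toy illustration of the general idea of answering from a single video's transcript. It assumes a hypothetical `Segment` type (a start time plus spoken text), a made-up lecture transcript and plain word overlap as the matching rule; an LLM-based system would almost certainly use learned embeddings and generate a synthesized answer rather than return a raw transcript line.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical transcript segment: start time in seconds plus spoken text."""
    start: float
    text: str


# Tiny illustrative stopword list; this toy matcher is not how an LLM system works.
STOPWORDS = {"the", "a", "an", "of", "is", "was", "what", "to", "and", "in", "it", "we"}


def tokenize(text: str) -> set[str]:
    """Lowercase, split on non-letter characters and drop stopwords."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def best_segment(question: str, transcript: list[Segment]) -> Segment | None:
    """Return the segment sharing the most content words with the question, or None if nothing matches."""
    query = tokenize(question)
    best, best_score = None, 0
    for seg in transcript:
        score = len(query & tokenize(seg.text))
        if score > best_score:  # strict ">" keeps the earliest segment on ties
            best, best_score = seg, score
    return best


def format_timestamp(seconds: float) -> str:
    """Render seconds as m:ss, the way a video player shows a position."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes}:{secs:02d}"


# Hypothetical lecture transcript used only for this illustration.
transcript = [
    Segment(0, "Welcome to today's lecture on modern cryptography."),
    Segment(95, "Now for the definition of polymorphic encryption, which we will use throughout."),
    Segment(240, "Next we will look at key exchange protocols."),
]

hit = best_segment("What was the definition of polymorphic encryption?", transcript)
if hit is not None:
    print(format_timestamp(hit.start), hit.text)
```

Returning the timestamp alongside the matched text is what would let a player jump straight to the relevant moment. Swapping word overlap for embedding similarity would also handle paraphrased questions that share no words with the transcript.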

- Data Summarization and Synthesis

Beyond granular Q&A, the tool also offers broad summarization. For long-form content, the AI can produce short summaries that highlight key segments and arguments. This is particularly useful in an age of information overload, letting professionals, students and casual viewers quickly judge whether a video is relevant before committing the time to watch it in full. In effect, it acts as a semantic filter that surfaces the “highlights” according to user intent rather than a predefined creator formula.

Strategic Implications for the YouTube Ecosystem

Testing an AI search feature on YouTube has far-reaching consequences for everyone in the ecosystem: users, creators and advertisers.

- Effect on User Engagement and Retention

From the user's point of view, the feature is intended to close the gap between what a video contains and what a viewer can easily find in it. Users who can quickly locate precise information are likely to be more satisfied. By keeping Q&A inside the video player, YouTube presumably expects longer sessions and fewer users leaving out of frustration when they cannot find a specific answer.

- The Shift for Content Creators: From Metadata to Semantics

This development marks a paradigm shift for content creators. Traditional SEO (keywords, engaging thumbnails) will remain relevant for initial discoverability, but because LLMs can interpret meaning, the spoken content of the video itself is likely to become a key factor in indexing.

As a result, creators may need to adapt by making sure their scripts are clear, structured and comprehensive. “Clickbait” titles that misrepresent a video's content could be penalized not only by user behavior but also by AI systems that can now “read” the video's true intent. Conversely, high-quality, educational and information-dense content will likely gain relevance, since AI can efficiently surface these videos for niche or complex queries.

Current Scope and Availability

As with many of Google's high-impact pilots, the experimental AI search tool on YouTube is available only in a limited environment for now. It is being trialled with a small number of users, mainly YouTube Premium subscribers in the United States, and supports English-language queries on mobile. This controlled rollout lets Google collect the performance data it needs, improve the accuracy of the LLM's responses and mitigate issues such as hallucinations or inaccurate summaries before any global launch.

Conclusion: The Future of Interactive Video

Google's test of an AI search tool on YouTube is more than a product feature: it signals how digital content consumption is changing, turning video from a passive medium into an interactive, conversational resource. By merging generative AI with the world's largest video archive, Google could transform how we learn, find entertainment and seek information, cementing its position at the forefront of the AI-powered semantic web.

Frequently Asked Questions (FAQs)

1. What is the experimental AI search tool on YouTube?

Google is testing an experimental conversational AI feature native to YouTube. It lets users ask questions about the video they are watching and receive a synthesized answer derived from the video's transcript. It can also condense long videos into their key segments.

2. What are the benefits of YouTube's conversational AI?

The main advantage of the new experimental AI search feature is efficiency. Instead of sifting through long videos to find the information you need, you can simply ask the AI. Natural-language queries and instant synthesis improve discovery and save time when learning from tutorials, lectures or documentaries.

3. Who has access to the experimental AI search feature on YouTube now?

Availability is very limited for now. The feature is open only to a small group of YouTube Premium subscribers in the U.S. who have opted into experimental features, and it currently works in English on the YouTube mobile app.

4. Will this new AI search feature work on all kinds of videos?

The experimental AI search tool on YouTube is only as good as the transcripts it works with. It performs best on videos with clear audio and accurate captions (whether creator-provided or auto-generated), and it is especially well suited to educational, instructional and information-dense content.

5. Can I ask questions about content that isn't in the video I am watching?

No. In its current phase, the experimental AI search feature is designed to work alongside the video you are watching. It performs all of its semantic analysis within that video's context, which helps keep its answers and summaries accurate and specific to that video's content.